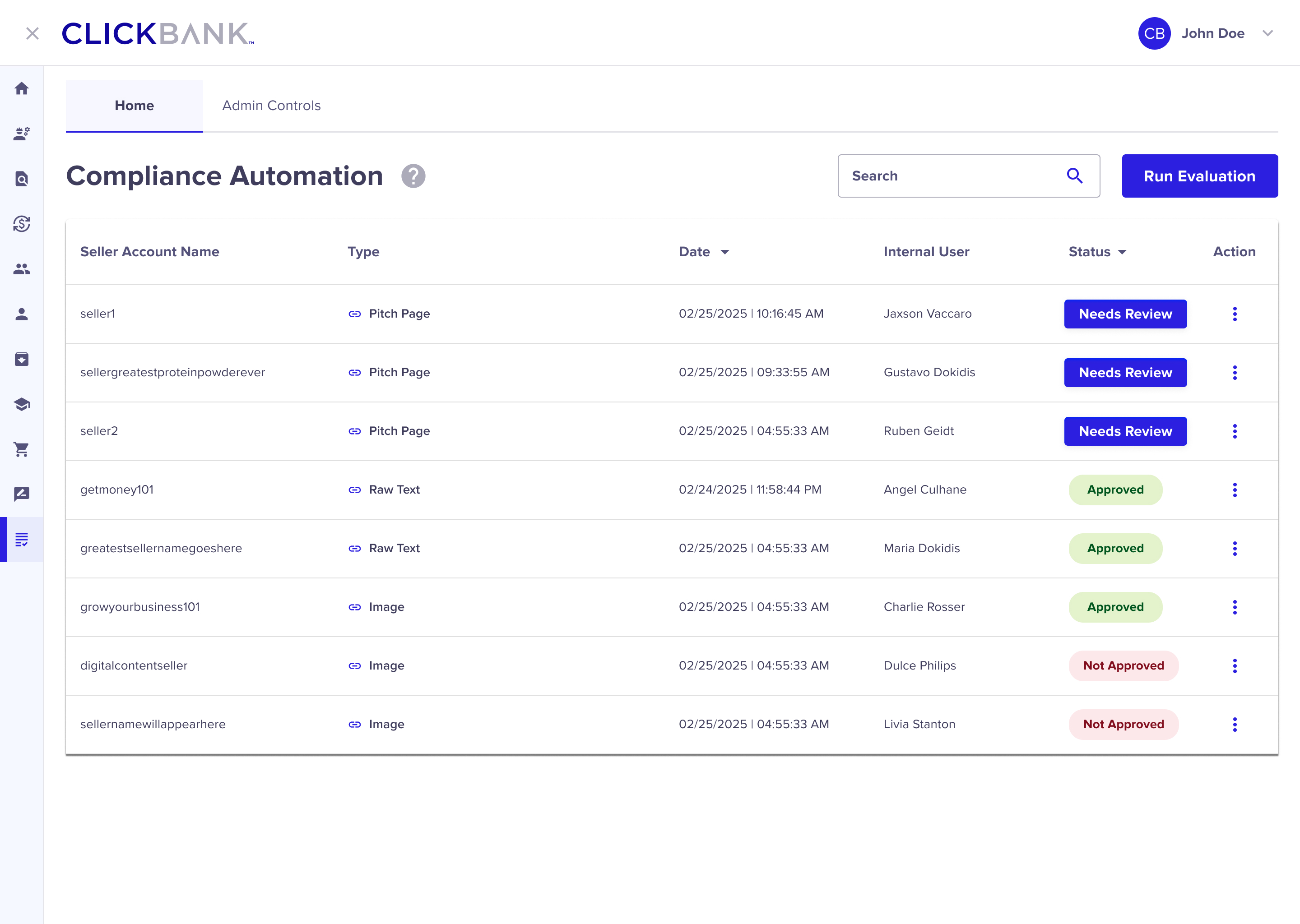

ClickBank’s compliance team plays a critical role in maintaining platform trust, ensuring that seller storefronts, claims, and documentation meet strict policy requirements. However, the review process was highly manual, time-intensive, and difficult to scale as submission volume increased. I led the design of an internal AI-assisted compliance platform that paired human judgment with large language models to dramatically accelerate reviews while maintaining clarity, control, and explainability for internal teams and sellers alike.

Compliance reviews required deep attention to detail across multiple inputs, including:

Seller storefronts (pitch pages).

Site imagery and marketing claims.

Product labels and certificates of insurance.

Each review could take one to two days, often involving repetitive tasks like extracting claims, validating required fields, and documenting rejection reasons. The process was accurate but slow—and increasingly difficult to scale.

The key challenge wasn’t automation alone, but how to safely introduce AI into a high-risk, policy-driven workflow without removing human accountability.

I led this initiative as the lead product designer, owning research, workflow design, interaction patterns, and AI-human collaboration models. I partnered closely with compliance leadership, engineering, and legal stakeholders to ensure the system improved efficiency without compromising policy interpretation or decision quality.

Through stakeholder interviews, workflow mapping, and review audits, several systemic opportunities emerged across compliance reviews of storefronts (pitch pages), claims, imagery, and certificates of insurance (COIs).

Compliance reviewers spent a disproportionate amount of time manually extracting and validating information across storefronts, images, product labels, and COIs. This included identifying marketing claims, verifying required fields, and cross-checking documents—work that was repetitive, time-intensive, and difficult to scale as submission volume increased.

Rejection feedback varied by reviewer and artifact type, often requiring additional time to document failures and explain next steps. For COIs and labels, missing details such as expiration dates or batch numbers were easy to overlook and slow to communicate, leading to avoidable resubmissions and extended review cycles.

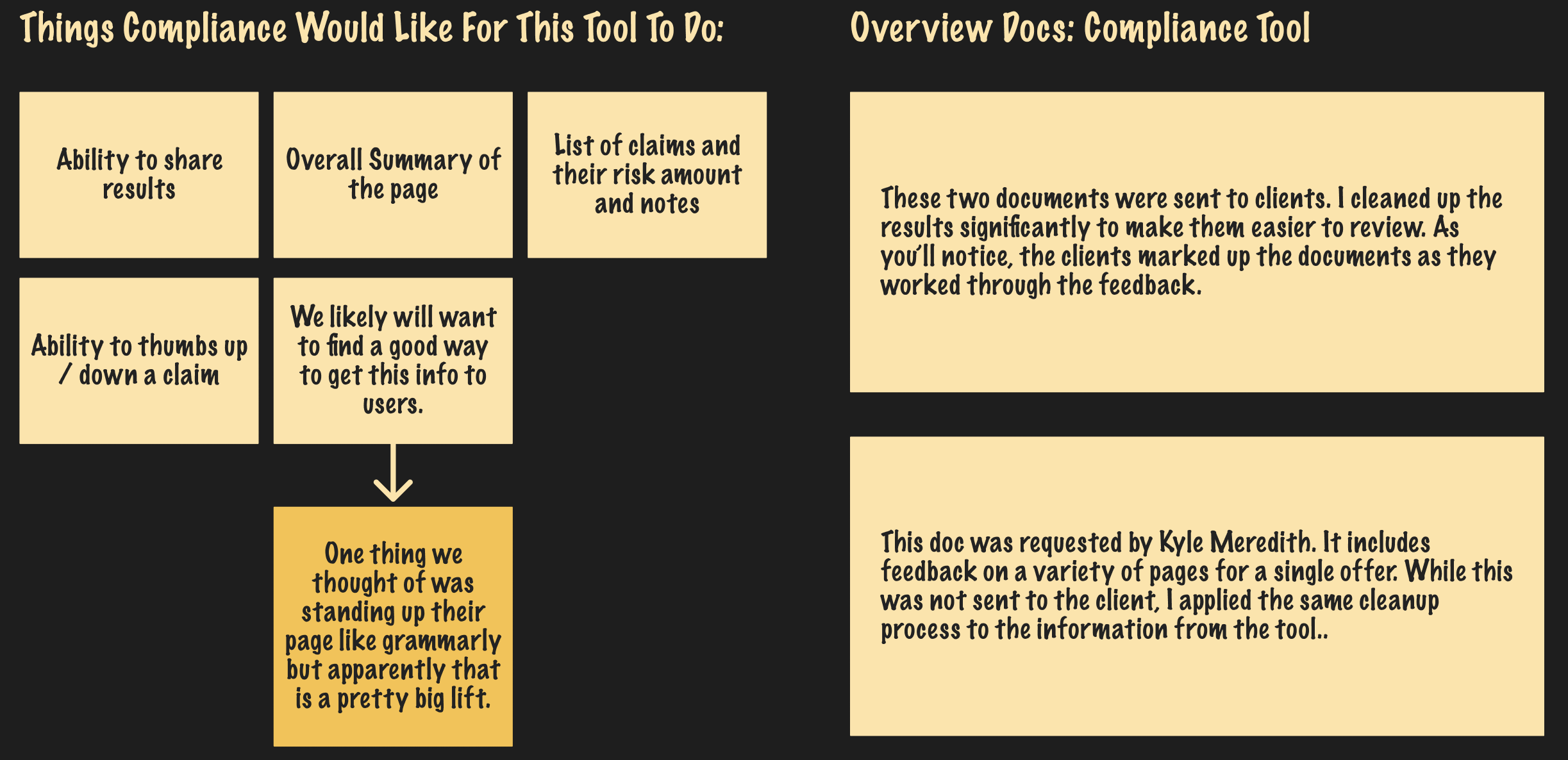

While AI showed promise in accelerating reviews, reviewers needed confidence and control before relying on it in a high-risk, policy-driven environment. Any AI-assisted solution had to be transparent, editable, and explainable—supporting decisions rather than replacing human judgment.

Key Insight: AI should assist and explain, not decide. The opportunity wasn’t full automation, but designing a human-in-the-loop system where AI reduced cognitive load, surfaced risks, and generated consistent outputs while reviewers retained ownership of final decisions.

Rather than positioning the LLM as an authority, the platform was intentionally designed to support, learn from, and defer to compliance reviewers. The goal was to reduce cognitive load and accelerate reviews—while ensuring humans retained full ownership of interpretation, judgment, and final decisions. Over time, reviewer input helped guide and refine the model’s outputs, improving accuracy and trust.

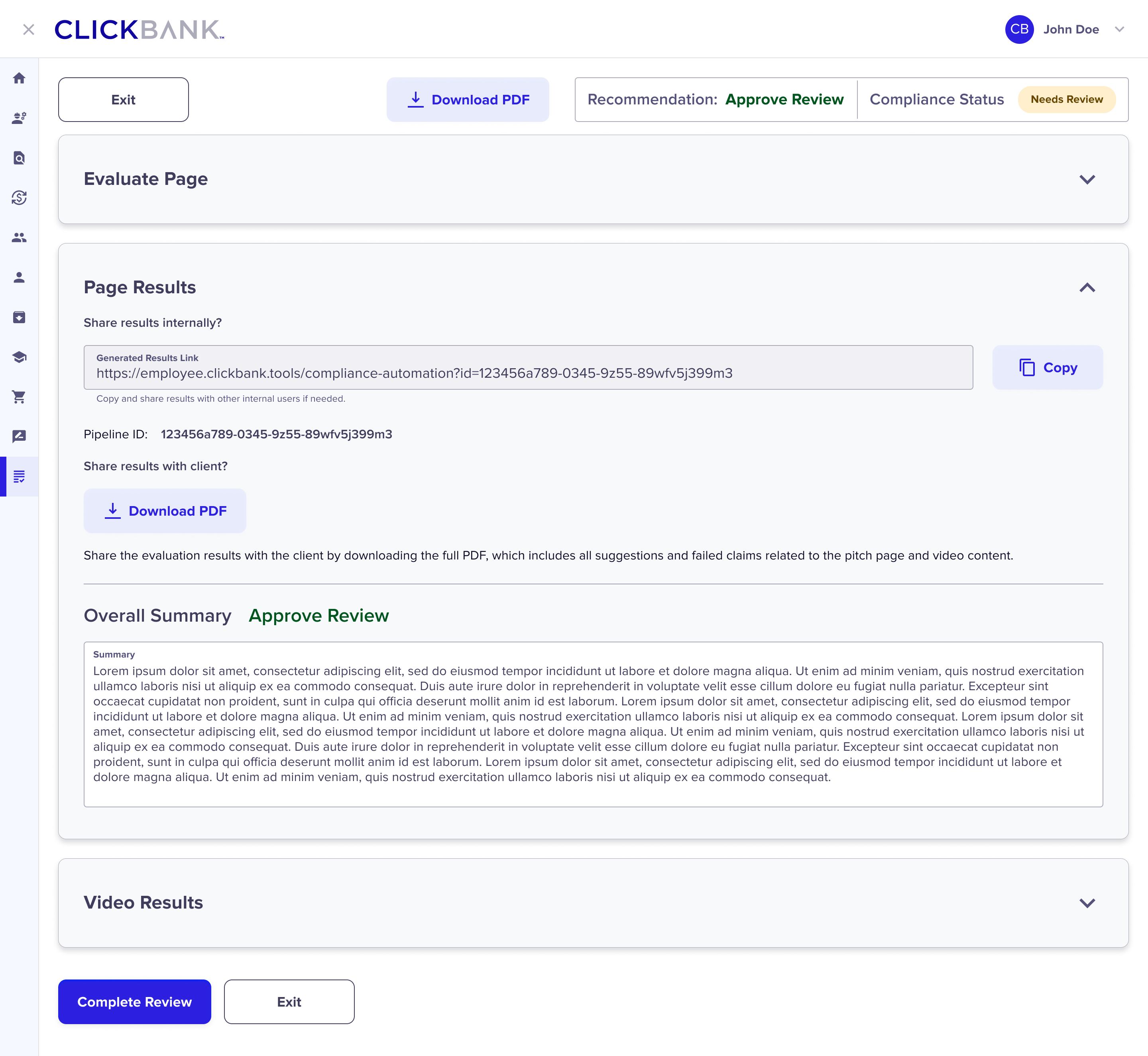

The system made AI reasoning visible by clearly surfacing detected claims, flagged risks, and the policy rules influencing each recommendation. Reviewers could see what the model identified and why, enabling faster validation and preventing black-box decision making in a high-risk compliance environment.

AI-generated outputs—such as claim evaluations, suggested rewrites, or rejection explanations—were fully editable by reviewers. This allowed compliance experts to correct nuance, apply contextual judgment, and ensure final messaging aligned with policy intent, reinforcing trust and accountability at every step.
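The editable-output pattern described above can be illustrated with a minimal sketch. It assumes a hypothetical `ReviewItem` record that keeps the AI draft alongside the reviewer's final text; the class, its fields, and the reviewer name are illustrative only and are not taken from the platform itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewItem:
    """Hypothetical record pairing an AI draft with a reviewer's final text."""
    ai_draft: str
    final_text: Optional[str] = None
    reviewer: Optional[str] = None

    def approve(self, reviewer: str, edited_text: Optional[str] = None) -> None:
        # A named reviewer signs off; their edit, if any, replaces the draft.
        self.reviewer = reviewer
        self.final_text = edited_text if edited_text is not None else self.ai_draft

    @property
    def was_edited(self) -> bool:
        # True only when a reviewer approved text that differs from the draft.
        return self.final_text is not None and self.final_text != self.ai_draft

    @property
    def publishable(self) -> bool:
        # Nothing is sent to a seller until a human has approved it.
        return self.reviewer is not None


item = ReviewItem(ai_draft="Remove the phrase 'guaranteed results'.")
item.approve("reviewer_a", "Replace 'guaranteed results' with 'results may vary'.")
```

Keeping the draft and the final text side by side is one way the reviewer's corrections could later be compared against model output, though how the real system stored or used that feedback is not specified here.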

The platform standardized how claims, storefronts, images, labels, and COIs were evaluated and communicated, reducing variability across reviews. At the same time, it preserved flexibility for edge cases, allowing reviewers to apply policy nuance without sacrificing clarity or speed.

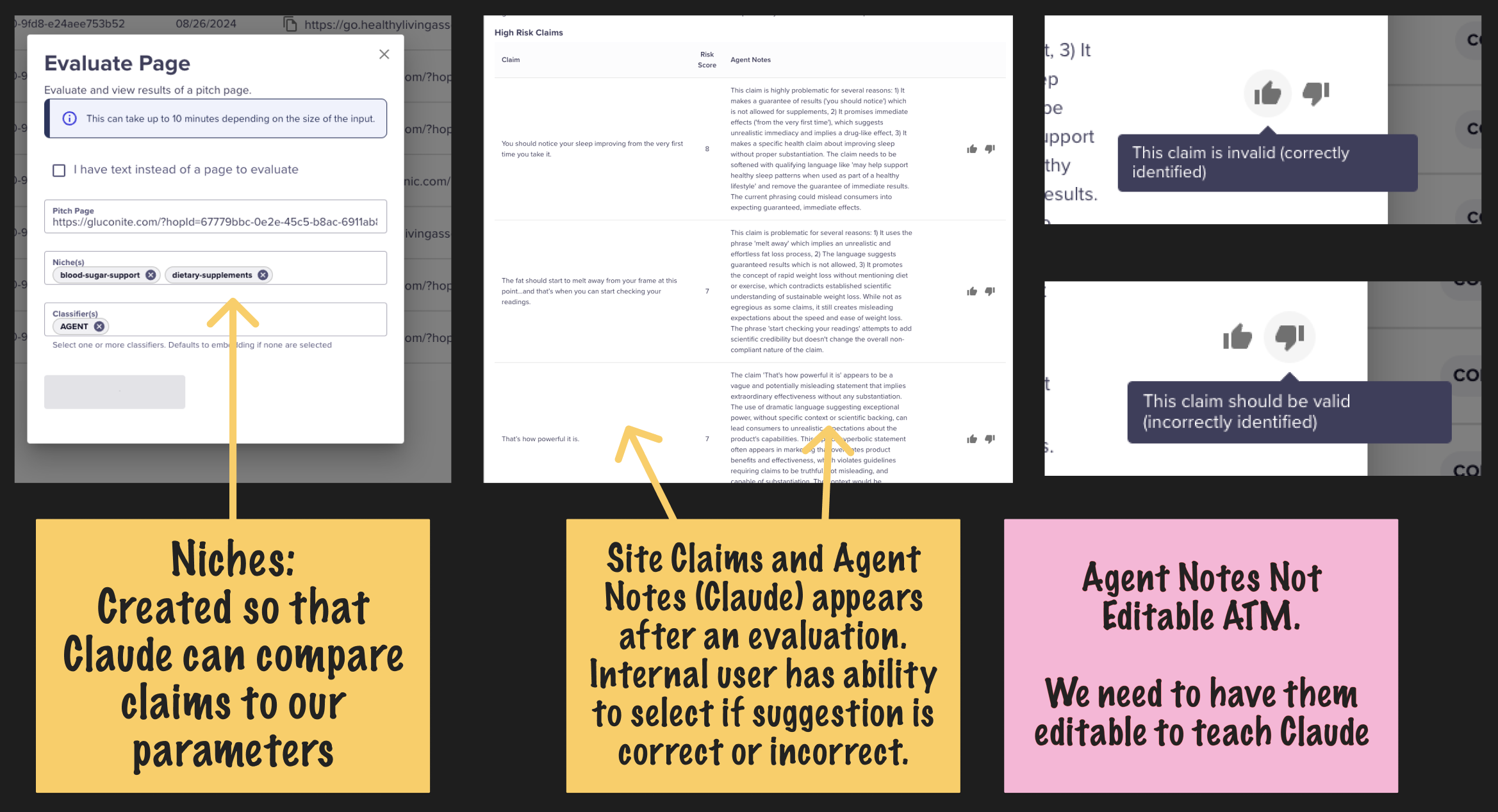

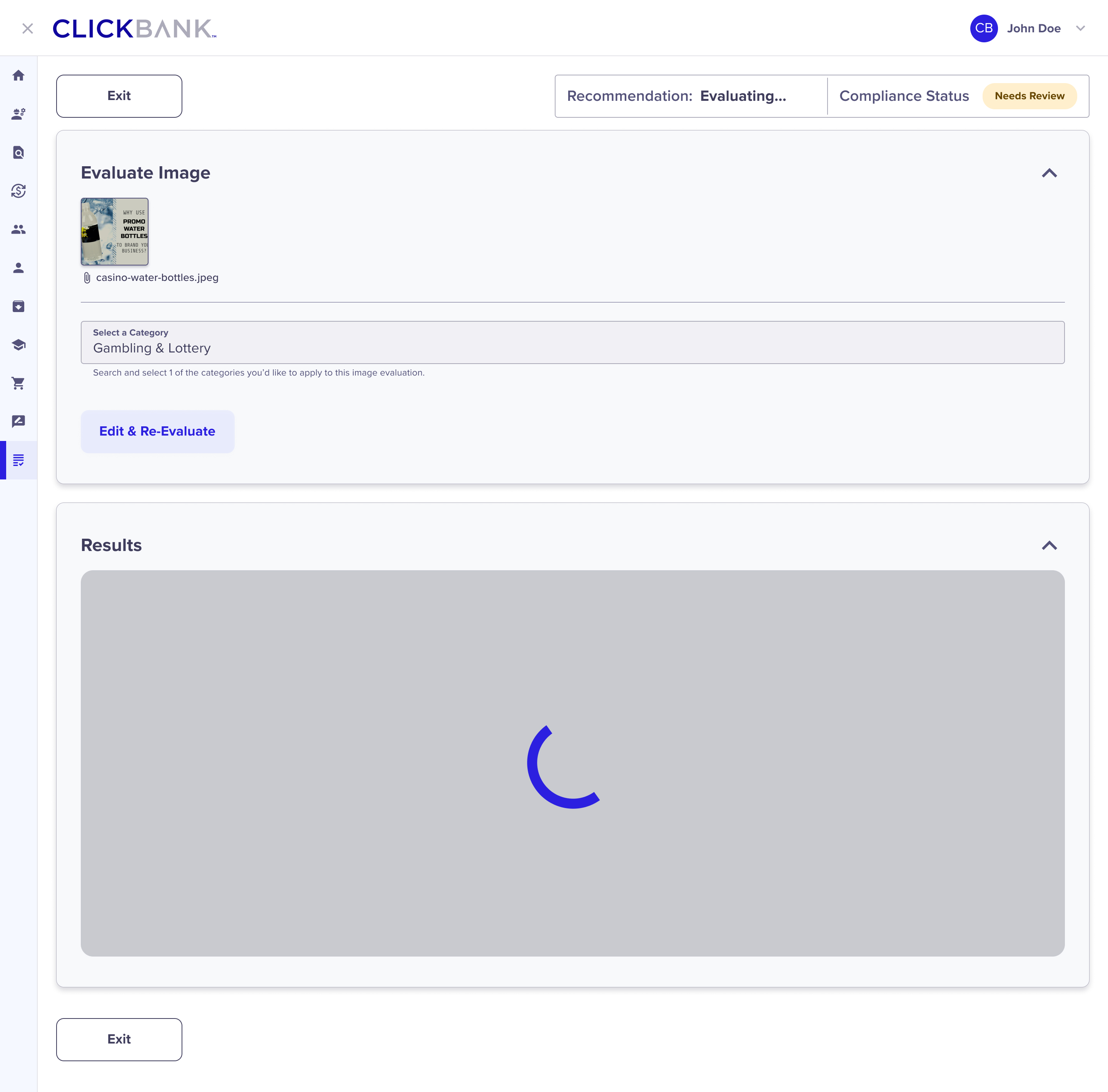

The platform allowed compliance reviewers to scan storefronts and site images, where the underlying AI services (Claude for language analysis, Amazon Textract for text extraction, and Amazon Rekognition for image analysis):

Identified and surfaced all claims made by the seller.

Evaluated claims against ClickBank policy.

Flagged non-compliant language with clear explanations.
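The three steps above (surface claims, evaluate them against policy, explain each flag) can be sketched as a small data flow. This is an illustration under stated assumptions, not the platform's implementation: the rules in `POLICY_RULES` are invented examples rather than ClickBank policy, and simple phrase matching stands in for the model's evaluation.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical rules: trigger phrase -> explanation shown to the reviewer.
# These are invented for illustration and are not ClickBank's actual policy.
POLICY_RULES: Dict[str, str] = {
    "cures": "Health claims may not promise to cure a condition.",
    "guaranteed": "Results may not be presented as guaranteed.",
}


@dataclass
class ClaimFinding:
    claim: str
    compliant: bool
    rule: Optional[str] = None          # which rule triggered the flag
    explanation: Optional[str] = None   # why the claim was flagged


def evaluate_claims(claims: List[str]) -> List[ClaimFinding]:
    """Return one finding per claim, each flag carrying its rule and reason."""
    findings = []
    for claim in claims:
        lowered = claim.lower()
        # Phrase matching is a stand-in for the model's policy evaluation.
        match = next((p for p in POLICY_RULES if p in lowered), None)
        if match is None:
            findings.append(ClaimFinding(claim, compliant=True))
        else:
            findings.append(ClaimFinding(claim, False, match, POLICY_RULES[match]))
    return findings


findings = evaluate_claims(["This supplement cures insomnia", "Ships within 3 days"])
```

The point of the structure is that every flag travels with the rule and explanation behind it, so a reviewer can see why something was flagged before accepting or overriding it.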

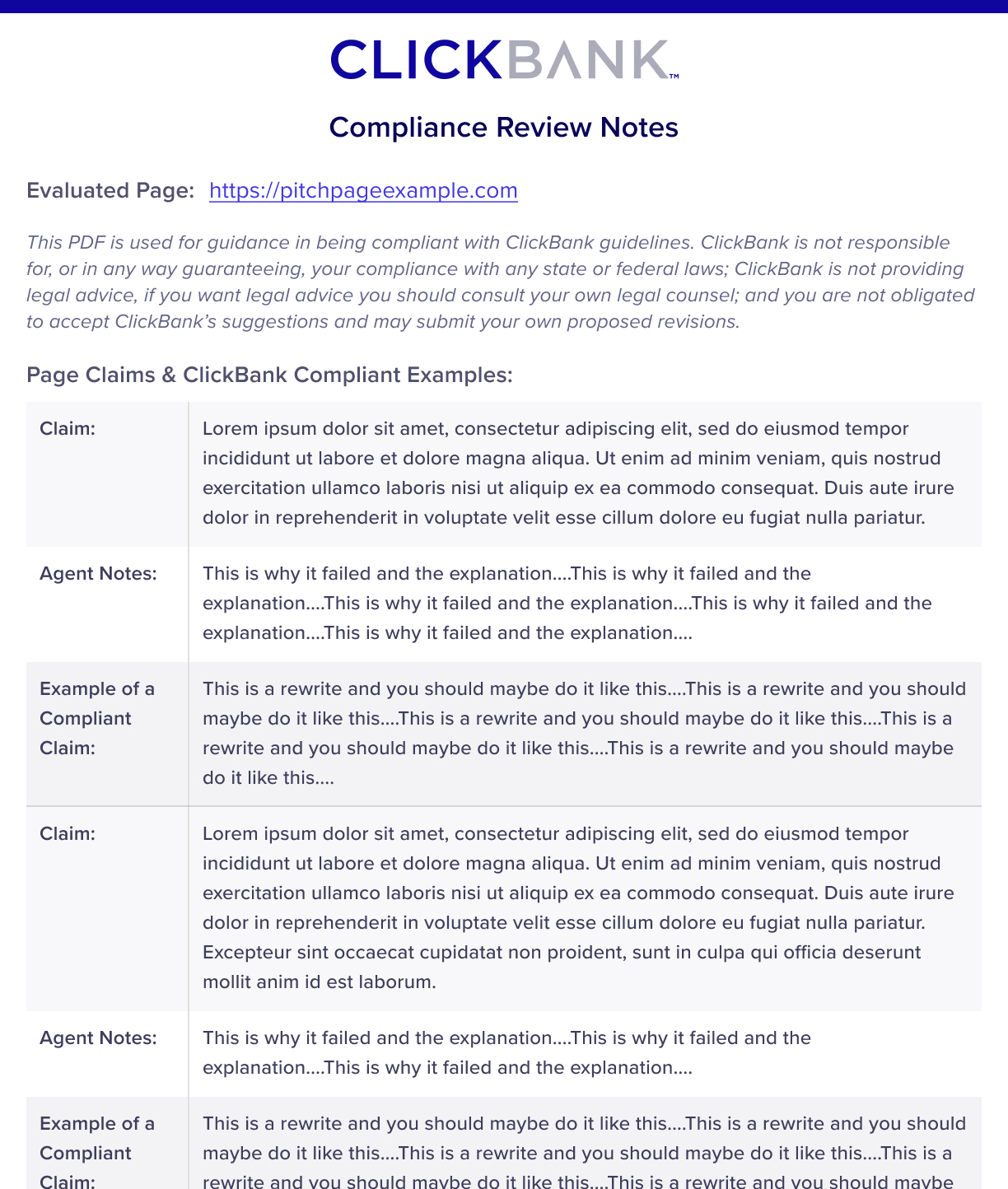

When a submission failed, the system automatically generated a PDF outlining why it did not pass, creating consistency and saving manual documentation time.

The LLMs also suggested policy-compliant rewrites, which internal reviewers could edit or approve—ensuring accuracy while dramatically reducing effort.

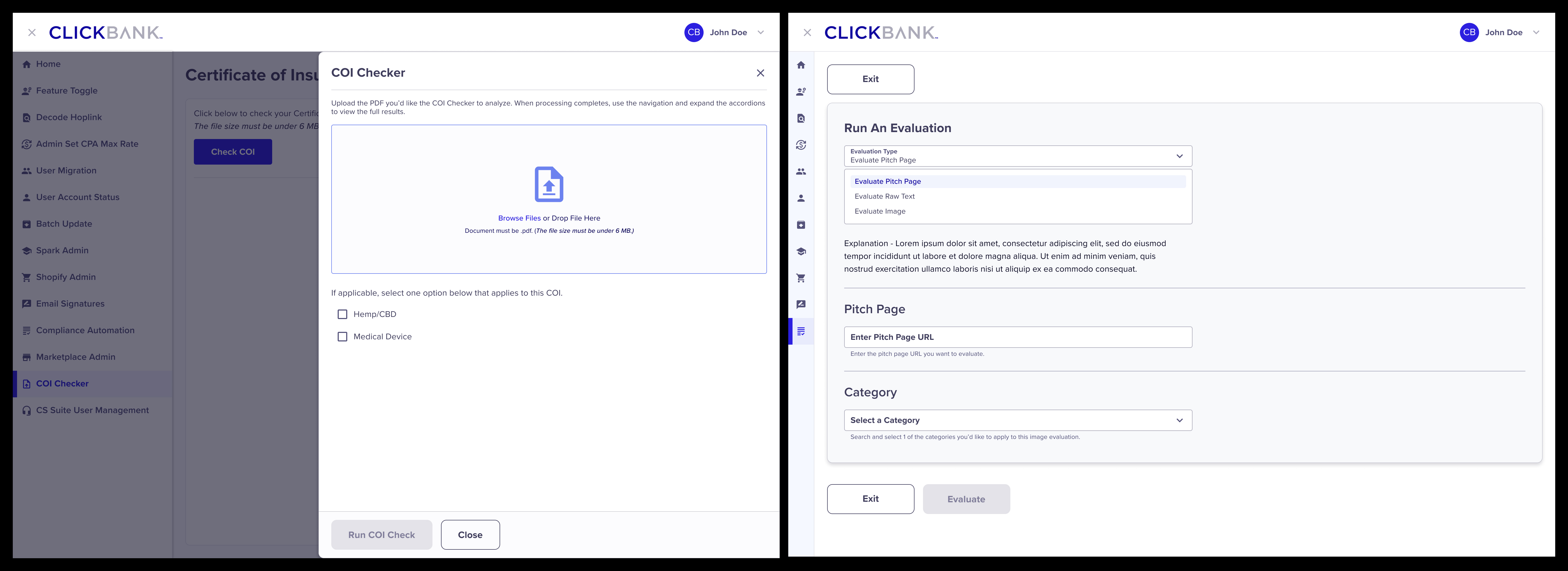

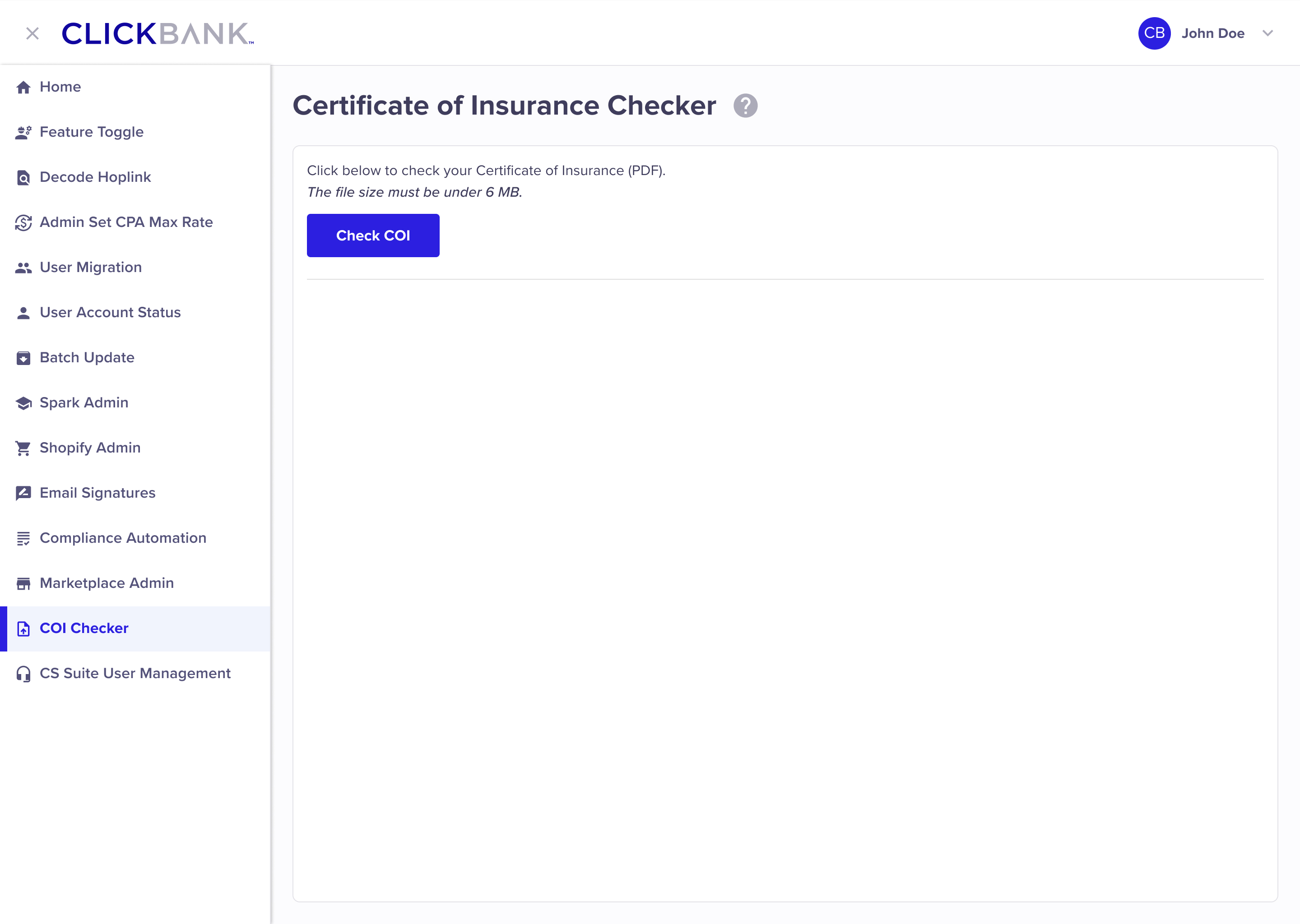

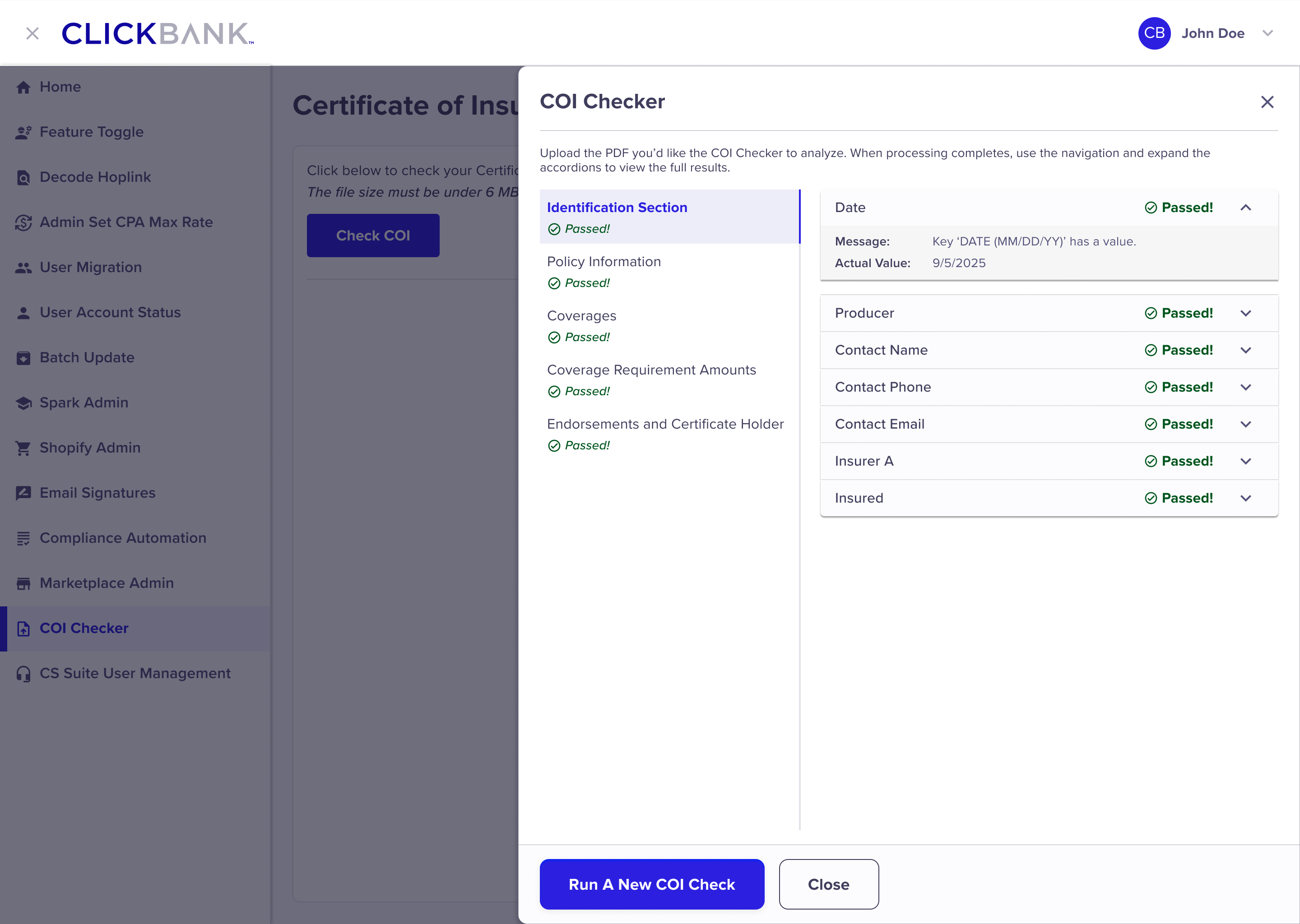

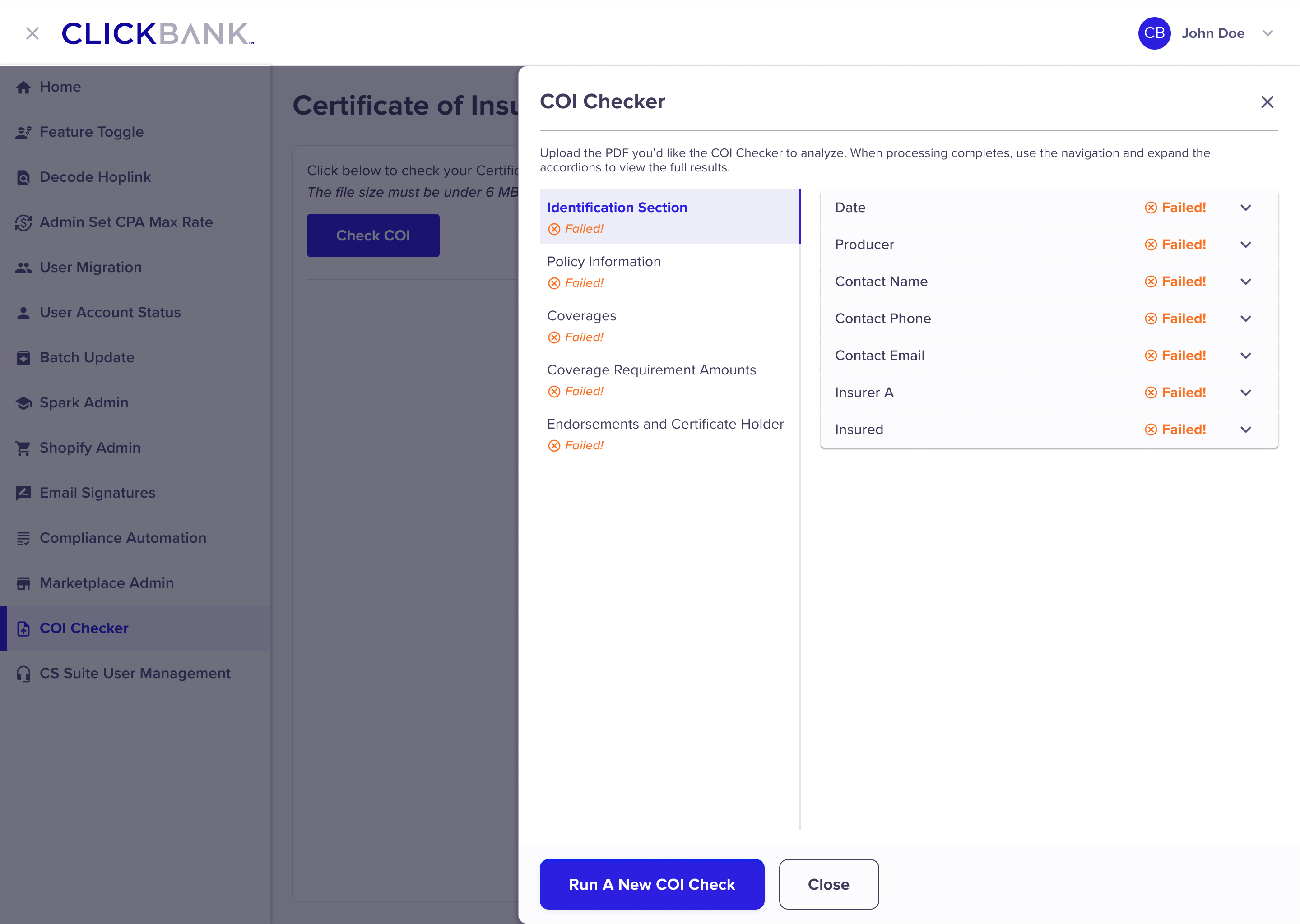

For certificates of insurance and product labels, reviewers could upload documents directly into the platform. The LLM:

Validated the presence of required fields.

Flagged missing information (e.g., expiration dates or ID numbers).

Clearly explained why a document failed and what was required to pass.

This turned previously unclear rejections into actionable feedback for both internal teams and sellers.
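A required-field check of this kind might look like the sketch below. It assumes the document's fields have already been extracted into a dictionary; the field names in `REQUIRED_COI_FIELDS` and the message wording are hypothetical and do not reflect ClickBank's actual requirements.

```python
from datetime import date
from typing import Dict, List, Optional

# Hypothetical required fields for a certificate of insurance.
REQUIRED_COI_FIELDS = ["insured_name", "policy_number", "expiration_date"]


def validate_coi(fields: Dict[str, Optional[str]], today: date) -> List[str]:
    """Return actionable messages; an empty list means the document passes."""
    problems = []
    for name in REQUIRED_COI_FIELDS:
        # Treat absent keys, None, and empty strings as missing.
        if not fields.get(name):
            problems.append(f"Missing required field: {name}. Add it and resubmit.")

    expiry = fields.get("expiration_date")
    if expiry:
        try:
            expired = date.fromisoformat(expiry) < today
        except ValueError:
            problems.append("Expiration date is unreadable. Use the format YYYY-MM-DD.")
        else:
            if expired:
                problems.append(f"Certificate expired on {expiry}. Upload a current one.")
    return problems


issues = validate_coi(
    {"insured_name": "Acme Co", "policy_number": "", "expiration_date": "2020-01-01"},
    today=date(2024, 1, 1),
)
```

Each message states both what failed and what the seller needs to do next, which mirrors the actionable feedback described above.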

The introduction of AI into the compliance workflow significantly transformed how reviews were performed. Review cycles were reduced from days to minutes, enabling the team to handle increased volume without additional staffing. By standardizing evaluations across storefronts, claims, images, and documentation, the platform improved consistency and trust at scale while keeping reviewers firmly in control of final decisions. Auto-generated PDFs and AI-suggested rewrites elevated feedback quality, helping sellers clearly understand why submissions failed and how to correct them. Most importantly, by allowing reviewers to train, edit, and guide the LLM, the system supported human-centered AI adoption, building internal confidence and continuously improving accuracy over time.

Collaboration Over Automation: AI delivered the most value when positioned as a partner rather than a replacement. By assisting with extraction, evaluation, and draft recommendations while leaving final judgment to users, the system simplified the work without removing user accountability or expertise.

Trust Is Designed, Not Assumed: In high-risk, policy-driven workflows, explainability and human override aren’t optional; they’re foundational. Making AI reasoning visible and editable was essential to building confidence, ensuring accuracy, and enabling responsible adoption at scale.

Learning Through Use: Allowing reviewers to edit, correct, and guide the LLM turned real-world usage into continuous training. Over time, this feedback loop improved accuracy, reduced friction, and strengthened trust between users and the system.

This project demonstrated how thoughtful design can move AI beyond experimentation into a dependable source of leverage: one that helps teams scale, brings clarity and consistency to complex decisions, and strengthens user expertise rather than replacing it.